Abstract

Generative AI reached classrooms faster than any clear policy or pedagogy. Most public guides cite broad values, yet instructors still need step-by-step plans for lessons, prompt coaching, and academic honesty. The GenAI Use and Ethics Framework meets that need. Its five levels link AI tasks to learning goals, course policy, assessment, student roles, and safeguards. The framework is built for both K-12 districts and universities. This paper explains the need, reviews existing guides, gives sample scenarios, and outlines next steps for faculty, instructional designers, teachers, and district leaders.

Introduction

AI can speed research, sharpen feedback, and potentially unlock new creative work, but it also raises bias, privacy, and honesty risks. Instructors need a plan that grows skill and protects integrity.

Several public guides help, yet none bridges principle and daily practice:

- The California State University ETHICAL Principles AI Framework lists seven campus values such as transparency and human-centered design but gives no staged classroom guidance (California State University, n.d.).

- The AI Assessment Scale (AIAS) grades assignments at five levels but gives limited tips for lesson delivery or bias checks (Furze, 2024).

- Inside Higher Ed’s “4 Stages of AI” moves from Regulate to Re-imagine yet skips learning objectives and assessment links (Maloney, 2023).

- The AI Literacy Framework builds AI literacy for primary and secondary pupils but does not address university teaching (European Commission & OECD, 2025).

- Khan Academy’s Responsible AI Framework guides product design rather than teachers (Khan Academy, 2025).

None ties concrete AI tasks to course goals, student roles, and built-in safeguards. The GenAI Use and Ethics Framework fills that need with a five-step roadmap that teachers, faculty, instructional designers, and district staff can adopt at once.

Problem Statement

Most district and campus rules simply mark tools as “allowed” or “banned”. They rarely show how to teach prompt writing, check bias, or build skills step by step. Practice varies across classrooms, confusing students and widening equity gaps. A staged model that blends sound pedagogy with ethics is needed for both school districts and universities.

Framework Overview

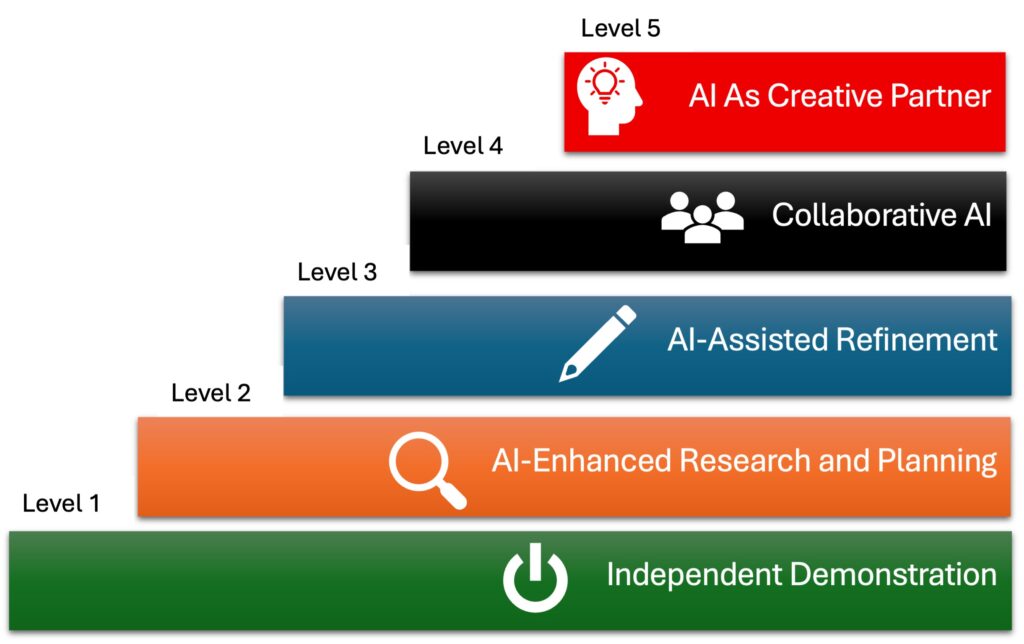

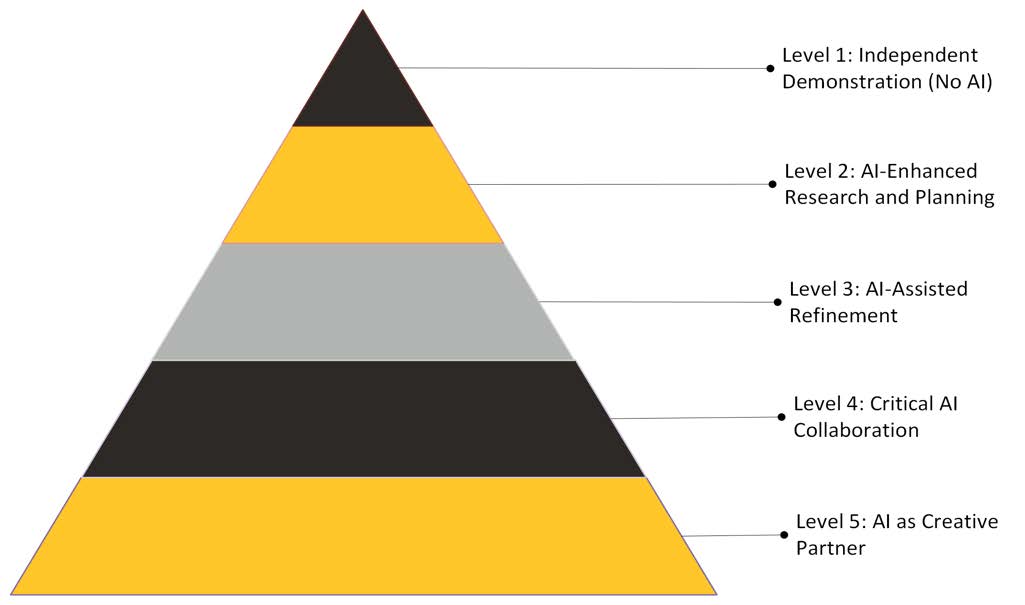

GenAI Use and Ethics Framework

Each level represents a distinct phase of AI integration, with defined components: learning objectives, AI policy, assessment design, student responsibility, and ethical/K-12 considerations.

| Level | Core Use | Instructor Focus | Student Role | Key Guardrails |
| --- | --- | --- | --- | --- |
| 1: Independent Demonstration | No AI | Teach source review | Produce unaided work | Timed, proctored tasks |
| 2: AI-Enhanced Research and Planning | Search and outline | Teach source review | Verify AI facts, cite queries | Keep prompt log |
| 3: AI-Assisted Refinement | Draft improvement | Coach revision cycles | Compare edits, justify picks | Screen output for bias |
| 4: Collaborative AI | Real-time cowriting | Guide teamwork | Set human-AI role | Track version history |
| 5: AI as Creative Partner | Build media, code, simulation | Mentor design thinking | Direct AI toward novel goals | Publish an ethics memo |

Progression is linear but flexible. An instructor may hold at a lower level when course outcomes or age groups demand restraint.

Implementation Guidelines

Learning Objectives

Write one objective per task that names the AI level.

Example: “Revise a thesis paragraph with GenAI feedback and justify accepted changes”.

AI Policy Language

Add a short AI statement to the syllabus or district pacing guide. Include allowed tools, the required citation style, and required bias and privacy checks. Link to FERPA and COPPA rules for K-12 settings.

Assessment Design

At Levels 2-4, collect both student work and AI output. At Level 5, collect an ethics memo with the artifact.

Student Responsibility

Students keep a prompt log and reflect on AI influence. Reflection length grows with each level.
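A prompt log can be as simple as a shared document, but a structured record makes it easier to see whether AI output was checked before it shaped a submission. The following is a minimal Python sketch, not part of the framework itself; the class names, field names, and the `unverified` helper are hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PromptLogEntry:
    """One student interaction with an AI tool (all field names are hypothetical)."""
    day: date
    tool: str                # for example, a district-approved chatbot
    prompt: str              # what the student asked
    used_in_work: bool       # whether any output shaped the submission
    verification: str = ""   # how the student checked the output, if at all


@dataclass
class PromptLog:
    """A per-student log for one assignment at a given framework level."""
    student: str
    framework_level: int     # 2 through 5; Level 1 uses no AI
    entries: list[PromptLogEntry] = field(default_factory=list)

    def add(self, entry: PromptLogEntry) -> None:
        self.entries.append(entry)

    def unverified(self) -> list[PromptLogEntry]:
        """Entries whose output was used but has no verification note."""
        return [
            e for e in self.entries
            if e.used_in_work and not e.verification.strip()
        ]


# Illustrative usage with made-up entries.
example = PromptLog(student="Student A", framework_level=2)
example.add(PromptLogEntry(date(2025, 9, 1), "approved chatbot",
                           "List sources on local history", True))
example.add(PromptLogEntry(date(2025, 9, 2), "approved chatbot",
                           "Suggest a brochure outline", True,
                           "Compared with the library archive"))
print(len(example.unverified()), "entry still needs a verification note")
```

An instructor could scan `unverified()` before grading to find entries that still need a verification note, which supports the Level 2 expectation that students verify AI facts.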

Equity and Privacy

Provide offline or shared device options where access is uneven. For minors, restrict tools that harvest personal data or sensitive work.

Sample Scenarios

| Course Example | Grade Level | Model Level | Description |
| --- | --- | --- | --- |
| Grade 8 Social Studies | K-12 | Level 2 | Students build a brochure on local history. They use a district-approved chatbot for sources, cross-check facts, and submit the brochure plus a prompt log. |
| Introductory Biology Lab | University | Level 2 | Students gather post-2020 papers on enzyme kinetics, verify each source in PubMed, and turn in an annotated bibliography and prompts. |
| First Year Composition | University | Level 3 | Learners place original and AI-revised drafts side by side, mark clarity gains and lost nuances, then choose edits to keep. |
| High School Multimedia Studio | K-12 | Level 4 | Teams co-write a podcast script with AI help, track prompts in shared docs, and discuss edits before recording. |
| Senior Data Science Capstone | University | Level 5 | Teams build an energy-use model with an AI coding assistant, test bias, and submit code plus an ethics memo. |

Assessment and Evaluation

Collect three data points each term:

- Performance – Compare grades before and after framework use.

- Engagement – Count AI prompt logs and student reflections.

- Compliance – Track incidents of misconduct related to AI.

District curriculum teams and university committees review results each term.
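The three data points above can be rolled into one per-course summary each term. The sketch below shows one hypothetical way to do that in Python; the function name, its parameters, and the per-student and per-100-student normalizations are assumptions added for illustration. A before-and-after grade comparison on its own does not show that the framework caused any change.

```python
from statistics import mean


def term_summary(grades_before, grades_after, prompt_logs,
                 reflections, ai_incidents, enrolled):
    """Summarize performance, engagement, and compliance for one course.

    All parameter names are hypothetical. Grades are lists of numeric
    scores; the other arguments are simple counts for the term.
    """
    if enrolled <= 0:
        raise ValueError("enrolled must be a positive count")
    return {
        # Performance: change in mean grade after framework use.
        "performance_change": round(mean(grades_after) - mean(grades_before), 2),
        # Engagement: prompt logs plus reflections, per enrolled student.
        "engagement_per_student": round((prompt_logs + reflections) / enrolled, 2),
        # Compliance: AI-related misconduct incidents per 100 students.
        "incidents_per_100_students": round(100 * ai_incidents / enrolled, 2),
    }


# Illustrative usage with made-up numbers.
summary = term_summary(
    grades_before=[72, 80, 88],
    grades_after=[78, 84, 90],
    prompt_logs=50,
    reflections=25,
    ai_incidents=1,
    enrolled=25,
)
for name, value in summary.items():
    print(name, value)
```

Normalizing by enrollment lets review teams compare a small seminar with a large lecture course on the same footing.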

Implications

Faculty and K-12 Teachers

- Align each assignment with a framework level.

- Teach prompt craft and bias checks as core digital skills.

- Use level progression to build AI competence across a program.

Instructional Designers

- Embed level-based templates in the LMS.

- Pair each level with rubrics that weigh ethical practice.

- Lead workshops that model tasks at every stage.

Institutions and Districts

- Match honor-code or academic honesty language to the five levels.

- Provide logging tools for transparency.

- Fund research on learning gains and equity across levels.

Adoption Roadmap

- Pilot two courses or grade-level units per semester, beginning Fall 2025.

- Review data, adjust guardrails, and share findings each term.

- Scale to program level by Fall 2027, with policy and resource updates.

Conclusion

The GenAI Use and Ethics Framework turns broad AI principles into clear stages, concrete tasks, and guardrails that work for both schools and universities. It offers a practical path to use generative AI while guarding honesty, privacy, and public trust. A small pilot in select classes may supply the evidence needed for refinement and broader roll-out, guided by close teamwork among faculty, teachers, instructional designers, and leaders.

Author’s Note and Disclosure

AI-assisted tools (ChatGPT and Perplexity AI) were used during the early stages of brainstorming, outlining, and refining ideas for this white paper. Perplexity AI supported comparative analysis across educational frameworks. No AI-generated content was used as-is; all final writing, synthesis, and framework design reflect the author’s independent professional expertise. All references were verified.

References

California State University. (n.d.). ETHICAL principles AI framework for higher education. https://genai.calstate.edu/communities/faculty/ethical-and-responsible-use-ai/ethicalprinciples-ai-framework-higher-education

European Commission & Organization for Economic Co-operation and Development. (2025, May 22). Empowering learners for the age of AI: AI literacy framework for primary and secondary education (Draft). https://ailiteracyframework.org/wpcontent/uploads/2025/05/AILitFramework_ReviewDraft.pdf

Furze, L. (2024, August 28). Updating the AI assessment scale. https://leonfurze.com/2024/08/28/updating-the-ai-assessment-scale/

Khan Academy. (2025, May 13). Khan Academy’s framework for responsible AI in education. https://blog.khanacademy.org/khan-academys-framework-for-responsible-aiin-education/

Maloney, E. J. (2023, April 4). The 4 stages of AI. Inside Higher Ed. https://www.insidehighered.com/blogs/learning-innovation/4-stages-ai

Marcus Green is an Instructional Designer with over 20 years of experience developing learning programs and materials for K–12, corporate, and higher education. He specializes in educational technology, faculty support, and creating engaging digital learning experiences. His current focus is on applying artificial intelligence in instructional design to improve learning outcomes and advance equity in education.